Cloud native applications: a primer

IT departments can grow so large and influential that it becomes tempting to believe the IT department exists for its own sake. But here’s the thing:

- unless you work in a real tech company, the only raison d’être for IT is to support the business, and more specifically to support business applications

- the business doesn’t care how you set up your infrastructure, as long as they get the apps they want, and as long as they work

App evolution

In the old days, running and supporting a single application meant adding a new wing to your datacenter, filling it with tons of equipment and staffing it with greasemonkeys to keep it running. Clearly, this was less than ideal, as applications had strong dependencies on the hardware and the operating system (OS). Developers couldn’t just focus on adding value for the business; instead they had to spend lots (if not most) of their time worrying about the hardware, infrastructure and OS.

As technology evolved, we have seen a movement to get rid of those dependencies and let development become as app centric as possible:

- virtualization has allowed us to run applications without physical hardware dependencies

- standardization and abstraction made sure we could worry about just one type of virtual hardware in the enterprise (x86, LUN/NFS storage)

- virtual machine languages (Java/.NET) and run-anywhere languages (JavaScript, Python) have abstracted the remaining hardware (x86) and OS dependencies away

Platforms & deployment

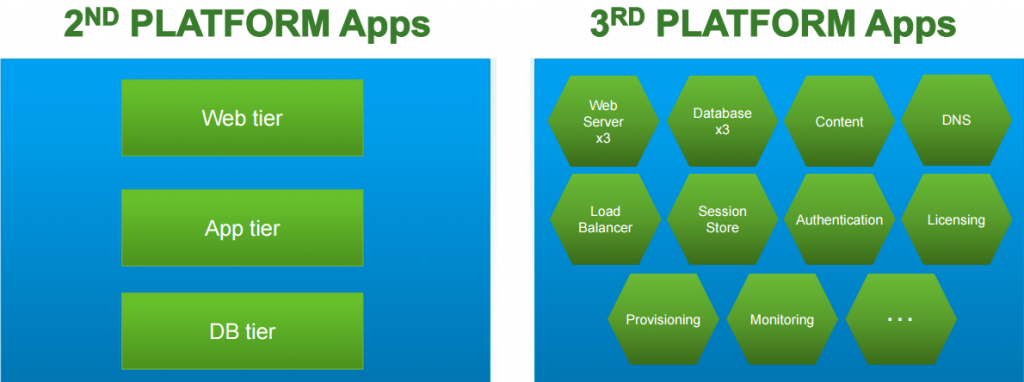

The same kind of movement has taken place in app deployment: the first applications were identified by the mainframe they ran on, the later (2nd) generation apps used a client-server, scale-up platform, and we now see a move to scale-out cloud applications.

Second and third generation platform applications.


These 3rd generation applications, or cloud native applications, are characterized by being independent of the hardware and OS, built to scale, built for failure, and running as Software-as-a-Service (SaaS) on a highly automated 3rd generation platform.

Containers

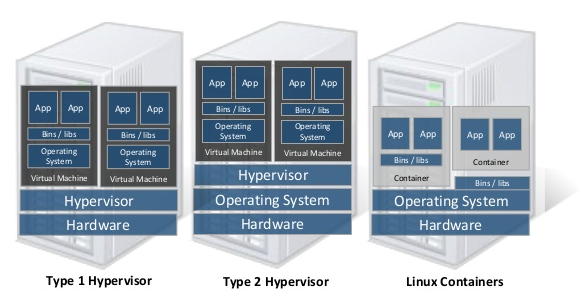

Containers are the common unit of deployment for cloud native applications, and can be seen as Operating System level virtualization: where virtual machines share the hardware of their hosting physical machine, containers share the OS core (kernel) of their hosting (virtual) machine. Therefore, containers provide a very lightweight deployment mechanism as well as OS level isolation enabling scale out of new instances in seconds (compared to minutes for VMs). In practical terms, a container is the application itself, bundled together with any libraries it requires to run and any user mode customization of the OS.

Containers are isolated but share the OS kernel of the host, which makes them lightweight. However, all containers on a host must use that same OS kernel.


An important thing to keep in mind is that containers do not lift the Operating System dependency:

- containers can only run on the same machine if they depend on the same OS kernel

- the app running inside the container should be made to run on that particular OS

- the OS kernel has to support containers
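The three constraints above can be read as a simple placement check. The sketch below is purely illustrative: the `Host` and `ContainerImage` types and their fields are hypothetical names made up for this example, not part of Docker or any scheduler's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Host:
    name: str
    kernel: str                # hypothetical label, e.g. "linux" or "windows"
    supports_containers: bool  # does this kernel offer OS level virtualization?


@dataclass(frozen=True)
class ContainerImage:
    name: str
    required_kernel: str       # the kernel the packaged app was built for


def can_run(image: ContainerImage, host: Host) -> bool:
    """True only if the host kernel supports containers and matches the image."""
    return host.supports_containers and image.required_kernel == host.kernel


def placeable(images, host):
    """Return the names of the images that could share this host."""
    return [image.name for image in images if can_run(image, host)]


# Example data (assumed for illustration only).
linux_host = Host("node-1", "linux", supports_containers=True)
images = [
    ContainerImage("web", "linux"),
    ContainerImage("legacy-iis", "windows"),
]
print(placeable(images, linux_host))
```

A real scheduler looks at far more than the kernel (resources, affinity, versions), but the kernel match is the one constraint containers cannot abstract away.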

Operating system level virtualization support has been in OS kernels for a long time, and the same can be said about container implementations. However, initial adoption was slow until a publicly available guest OS (Linux) and container implementation (Docker) offered access to developers in a friendly way. Since then, adoption has skyrocketed, as containers enable very short application release cycles.

3rd generation platforms

While containers are great, they are really just the deployment artifact of a true 3rd generation platform. A complete platform requires the following essentials:

- infrastructure: ESXi, Hyper-V, bare metal (no hypervisor)

- container optimized OS: CoreOS, Photon, Ubuntu, Azure OS, Warden

- container packaging: Docker, CF DEA and droplets, zip + deployment manifest

- cluster scheduler: Kubernetes, libswarm, Mesos, Azure Fabric Controller, CF Cloud Controller, SCALR, RightScale

- management interface: Terraform, shipyard, Mesosphere, Apprenda, Jelastic, Azure websites, BOSH or Pivotal CF

The various platforms above are very much still in development. Platforms differ most by maturity, supported languages and/or runtimes (Java/Node/.NET), the implied OS (Linux/Windows), out of the box stack integration, intended workloads (big data vs. enterprise apps), centralized logging, management UI capabilities, and perhaps most important: community. Some illustrations of the evolving landscape:

- as it stands, in almost all cases the container OS is some flavor of Linux, but Microsoft announced Docker engine support in the next server OS, and Cloud Foundry has alpha engine (BOSH) support for Windows in the form of IronFoundry.

- Docker is (becoming) the dominant container packaging artifact. To illustrate this: Cloud Foundry originally used a different container construction technology, based on detection of the bare application type (Java/Node) followed by a scripted, on demand construction of a droplet. The next iteration, codenamed Diego, will have support for various container back-ends including Docker.

Containers or VMs?

After seeing all the goodness containers can provide, it’s natural to wonder whether we still need virtual machines. After all, if we have OS level virtualization, why would we need to stack it on top of machine level virtualization? The answer is twofold:

- security: hardware level virtualization is much more mature, and hardware assisted. This provides a battle hardened level of isolation on top of software based container isolation. Moreover, specifying security policies such as firewalls at the hardware level can still be convenient.

- functionality: virtualization suites such as VMware vSphere are more than just a hypervisor. Extra functionality, such as the ability to dynamically distribute resources and to deploy new machines from templates in minutes, can still be a convincing argument in favor of hardware virtualization, even when using OS level virtualization on top of it.

Moving to the 3rd generation

Creating new apps for the 3rd generation platform is not rocket science. It does however require a different mindset on what a solution architecture should look like. Developers and businesses should both understand the (im)possibilities in adopting this new platform generation.

Developers: the 12 factor app

I already mentioned a limited set of characteristics of cloud native applications above. Adam Wiggins wrote a manifesto about the so-called 12 factor app, in which he describes the ingredients/factors for a proper cloud native app. Depending on the specific application, not all factors are equally relevant, so the 12 factors should be considered a set of best practices, not a law set in stone. However, developers writing new applications or migrating legacy applications to the cloud would do well to consult this document before making any definitive decisions.
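To make one of the factors concrete: factor III ("Config") says configuration should be stored in the environment rather than in the code. A minimal sketch of what that could look like follows. The variable names `DATABASE_URL`, `PORT` and `DEBUG` and the `Settings` class are assumptions chosen for illustration; the manifesto itself does not prescribe them.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class Settings:
    database_url: str
    port: int
    debug: bool


def load_settings(env=None) -> Settings:
    """Build settings from environment variables (hypothetical names)."""
    env = os.environ if env is None else env
    return Settings(
        # Required: a missing key raises KeyError, so the app fails fast.
        database_url=env["DATABASE_URL"],
        # Optional values fall back to defaults.
        port=int(env.get("PORT", "8080")),
        debug=env.get("DEBUG", "false").lower() == "true",
    )


# Passing a plain dict keeps the example deterministic; in a real app you
# would call load_settings() and let it read os.environ.
example = load_settings({"DATABASE_URL": "postgres://db.example/app", "PORT": "9000"})
print(example)
```

Because nothing environment-specific is baked into the build, the same container image can be promoted unchanged from test to production.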

All in or hybrid?

It’s always easier to start with a clean slate. When you have, for example, a greenfield environment with some ESXi hosts, it’s relatively easy to install a 3rd generation platform stack and start hosting 12 factor applications. In practice, however, most enterprises will have numerous legacy apps running just fine on (virtualized) hardware. Depending on the number and type of legacy apps in your organisation, you may want to go all in on the next generation and make a clean switch, or rather build a hybrid environment.

In the case of a hybrid approach you will have to identify the best candidates for early migration. To do this you can look at:

- application workload: which apps have a substantial workload that is scaled up, not out, in your current environment?

- the work involved to migrate: stateless, modular applications are much easier to migrate than stateful, monolithic ones

Existing web applications are usually the top candidates because, when designed well, they are stateless and modular. An example of a hybrid approach is therefore to migrate specific web applications to the new platform, keep existing databases on predetermined fixed hardware, and provision them as a service on the 3rd generation platform.
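The selection criteria above can be turned into a rough first-pass ranking. The sketch below is one possible way to do that, not a prescribed method: the `App` fields, the equal weighting of the criteria and the example inventory are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class App:
    name: str
    scaled_up: bool  # substantial workload that is currently scaled up, not out
    stateless: bool
    modular: bool


def migration_score(app: App) -> int:
    """Count how many criteria the app meets (equal weights are an assumption)."""
    return int(app.scaled_up) + int(app.stateless) + int(app.modular)


def rank_candidates(apps):
    """Best candidates first; ties are broken alphabetically by name."""
    return sorted(apps, key=lambda app: (-migration_score(app), app.name))


# Hypothetical inventory.
inventory = [
    App("billing-db", scaled_up=True, stateless=False, modular=False),
    App("web-shop", scaled_up=True, stateless=True, modular=True),
    App("intranet-wiki", scaled_up=False, stateless=True, modular=False),
]
for app in rank_candidates(inventory):
    print(app.name, migration_score(app))
```

In this made-up inventory the stateless, modular web application ends up on top, while the database scores low and is a natural fit to stay on fixed hardware and be offered as a service.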